Modernizing Facebook Groups Search to Unlock the Power of Community Knowledge

Engineering at Meta

·

Lessons from Log4Shell: Building a CRA-Compliant Log4j

The Apache Software Foundation Blog

·

Automatically Diagnosing Kubernetes Alerts with HolmesGPT and CNCF Tools

Cloud Native Computing Foundation

·

Fragments: April 21

Martin Fowler

·

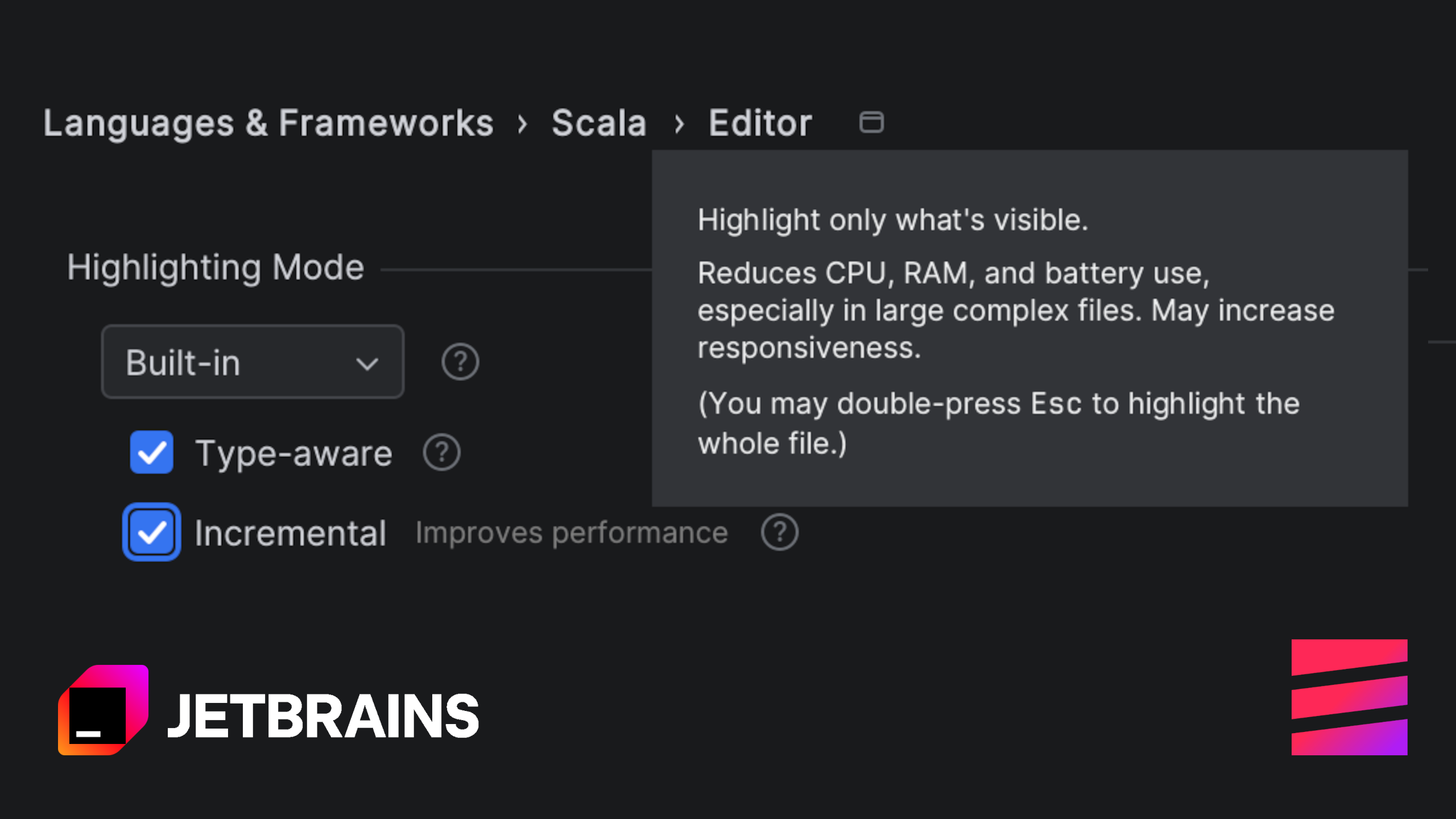

Incremental Highlighting

The JetBrains Blog

·