Radim Marek: HOT Updates in Postgres

Planet PostgreSQL

·

Ensuring PostgreSQL Backup Continuity: A pgBackRest Update

Percona Database Performance Blog

·

Why Developers Choose Postgres as the Database for AI

The New Stack

·

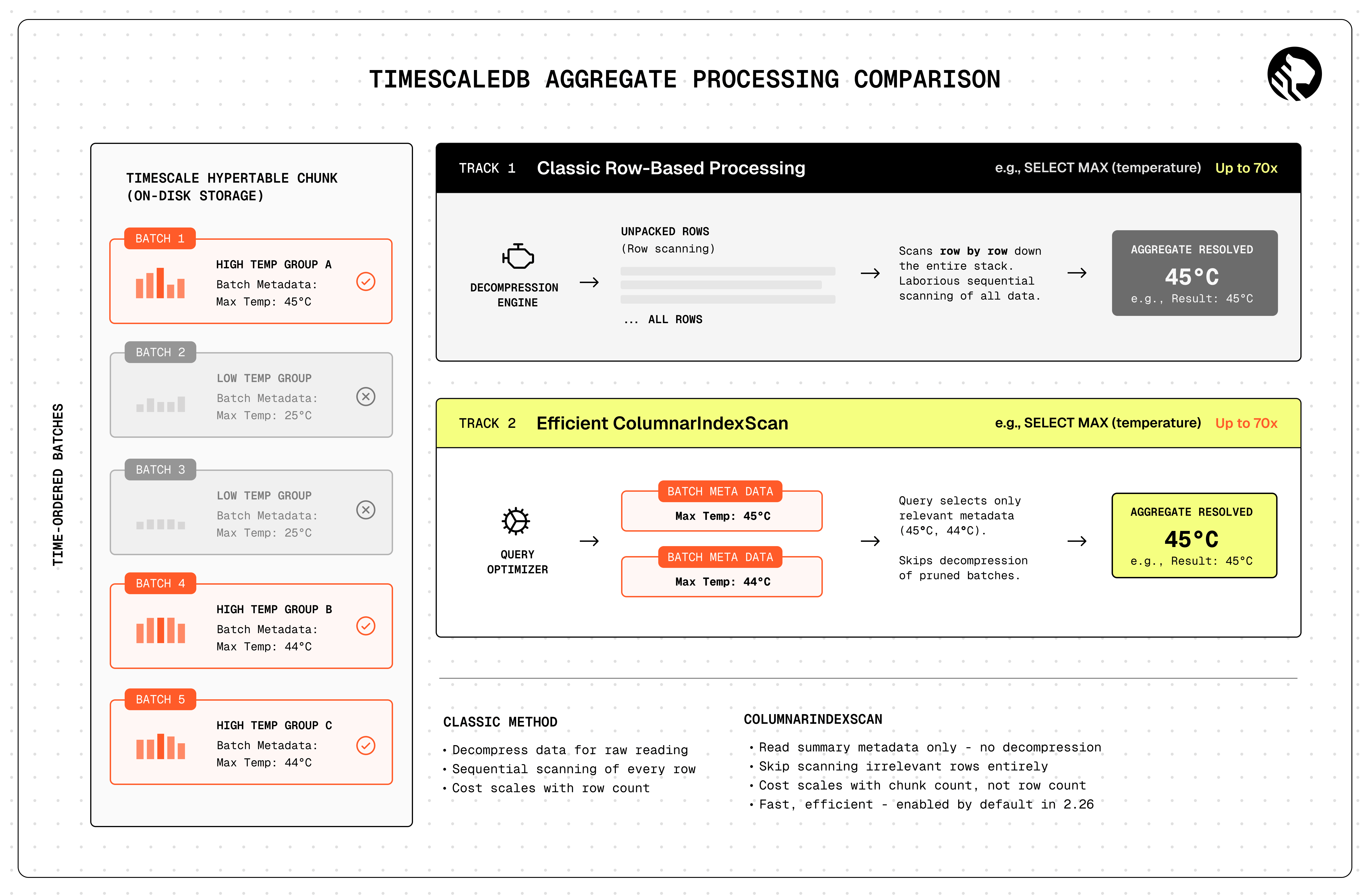

TimescaleDB 2.26: 3.5x Faster time_bucket() Aggregations, 70x Faster Summary Queries, and Faster Multi-Column Lookups

Timescale Blog

·

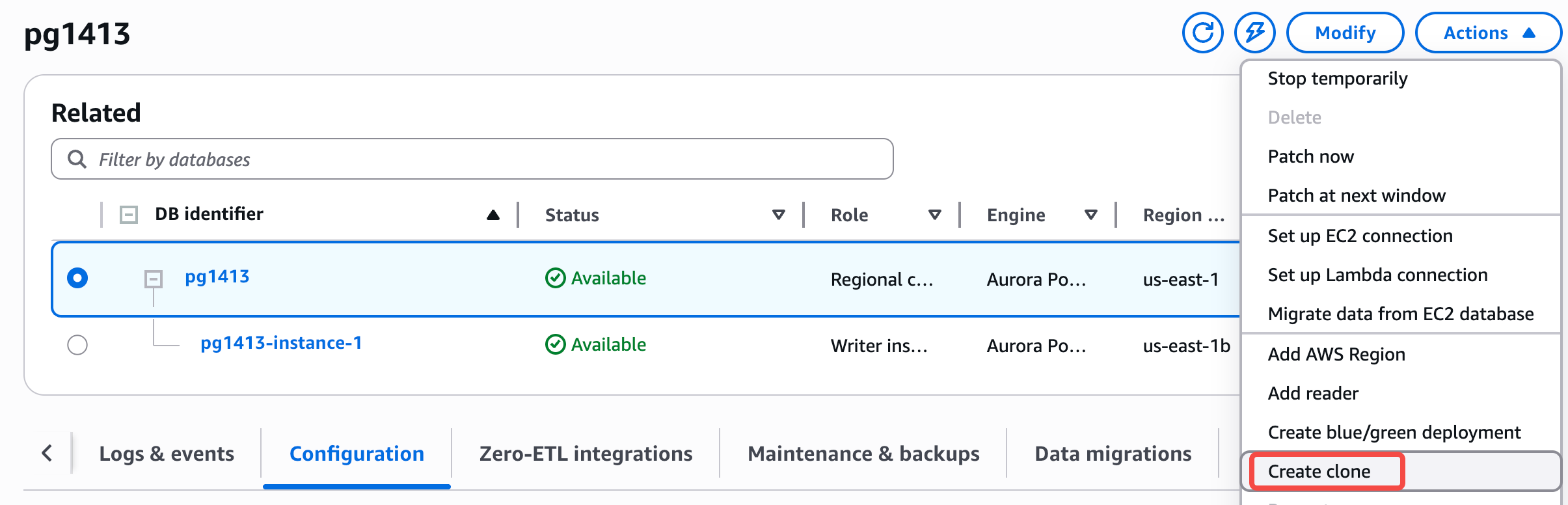

Aurora PostgreSQL Major Version Upgrade Guide

Amazon AWS Official Blog

·

Antony Pegg: Introducing the AI DBA Workbench: A PostgreSQL Monitoring Tool That Diagnoses, Not Just Reports

Planet PostgreSQL

·

How to Use PostgreSQL as a Cache, Job Queue, and Search Engine

freeCodeCamp.org

·

PostgreSQL Performance: Is Your Query Slow or Just Long-Running?

Percona Database Performance Blog

·

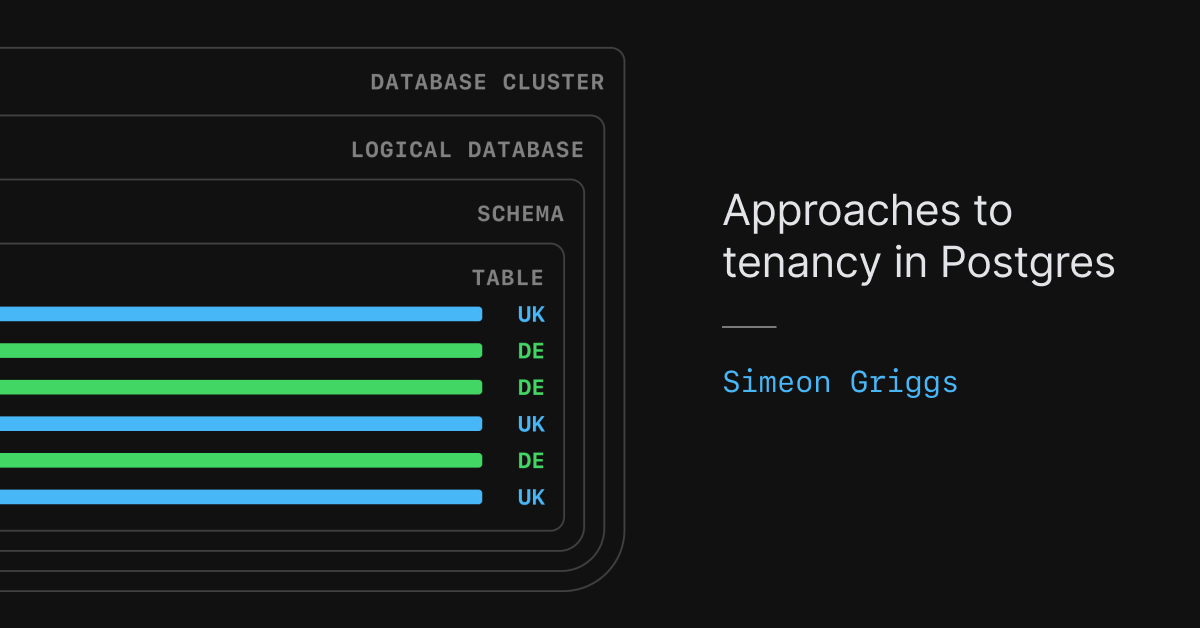

Approaches to Multi-Tenant Architecture in Postgres

PlanetScale - Blog

·